![[PDF] Explaining the unsuitability of the kappa coefficient in the assessment and comparison of the accuracy of thematic maps obtained by image classification | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/31589e2ee1daaf23a836cfbfe61ec52e1f249075/12-Table1-1.png)

[PDF] Explaining the unsuitability of the kappa coefficient in the assessment and comparison of the accuracy of thematic maps obtained by image classification | Semantic Scholar

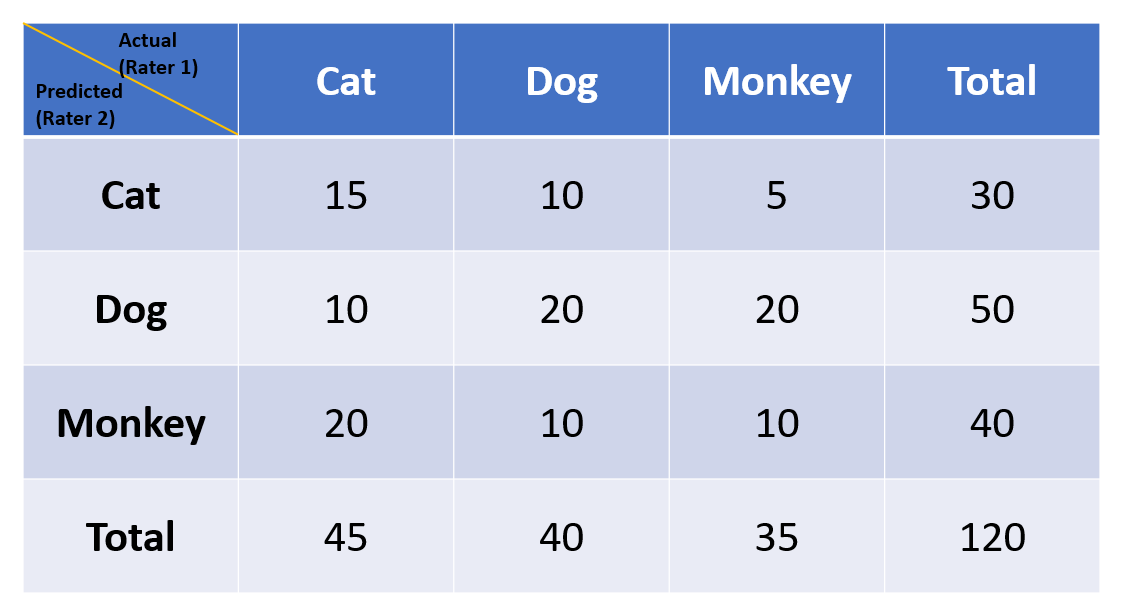

Multi-Class Metrics Made Simple, Part III: the Kappa Score (aka Cohen's Kappa Coefficient) | by Boaz Shmueli | Towards Data Science
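The kappa score compares the observed agreement p_o with the agreement p_e expected by chance from the row and column totals: κ = (p_o - p_e) / (1 - p_e). The following is a minimal Python sketch. It assumes a square confusion matrix stored as a list of lists, with rows as actual classes and columns as predicted classes. The function name `cohens_kappa` and the sample counts are illustrative and are not taken from the linked article.

```python
def cohens_kappa(matrix):
    """Cohen's kappa for a square confusion matrix (rows: actual, columns: predicted)."""
    k = len(matrix)
    total = sum(sum(row) for row in matrix)
    # Observed agreement: share of items on the diagonal.
    p_o = sum(matrix[i][i] for i in range(k)) / total
    # Chance agreement: sum over classes of (row total * column total), normalised.
    row_totals = [sum(row) for row in matrix]
    col_totals = [sum(matrix[i][j] for i in range(k)) for j in range(k)]
    p_e = sum(r * c for r, c in zip(row_totals, col_totals)) / total ** 2
    if p_e == 1:
        raise ValueError("kappa is undefined when chance agreement equals 1")
    return (p_o - p_e) / (1 - p_e)


# Hypothetical 2-class matrix: 70% raw agreement, 50% expected by chance.
print(round(cohens_kappa([[20, 5], [10, 15]]), 3))  # 0.4
```

If you have two label vectors instead of a matrix, scikit-learn's `sklearn.metrics.cohen_kappa_score(y1, y2)` computes the same unweighted statistic.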

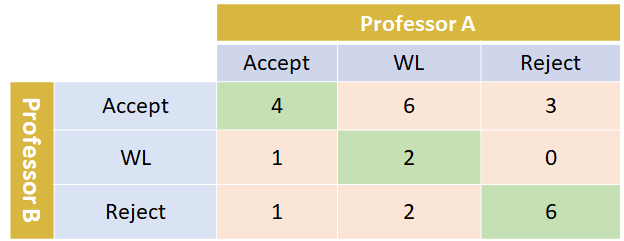

Comparison of Cohen's Kappa and Gwet's AC1 with a mass shooting classification index: A study of rater uncertainty
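Cohen's kappa and Gwet's AC1 share the form (p_a - p_e) / (1 - p_e). They differ only in how chance agreement p_e is estimated. The following is a minimal sketch for two raters. It assumes the standard two-rater form of AC1, where p_e = Σ π_k(1 - π_k) / (q - 1), π_k is the average of the two raters' marginal proportions for category k, and q is the number of categories. The function name and the skewed table are hypothetical and are not data from the cited study. The table shows the well-known effect in which one dominant category drives kappa toward zero or below despite high raw agreement, while AC1 stays high.

```python
def agreement_stats(table):
    """Return (observed agreement, Cohen's kappa, Gwet's AC1) for two raters.

    table[i][j] counts items rater A put in category i and rater B in category j.
    Requires at least two categories (q >= 2).
    """
    q = len(table)
    n = sum(sum(row) for row in table)
    p_a = sum(table[i][i] for i in range(q)) / n
    row = [sum(table[i]) / n for i in range(q)]
    col = [sum(table[i][j] for i in range(q)) / n for j in range(q)]
    # Cohen: chance agreement from the product of each rater's marginals.
    pe_kappa = sum(r * c for r, c in zip(row, col))
    # Gwet AC1: chance agreement from the averaged marginals pi_k.
    pi = [(r + c) / 2 for r, c in zip(row, col)]
    pe_ac1 = sum(p * (1 - p) for p in pi) / (q - 1)
    kappa = (p_a - pe_kappa) / (1 - pe_kappa)
    ac1 = (p_a - pe_ac1) / (1 - pe_ac1)
    return p_a, kappa, ac1


# Hypothetical skewed table: 90% agreement, but one category dominates.
p_a, kappa, ac1 = agreement_stats([[90, 5], [5, 0]])
print(f"agreement={p_a:.2f} kappa={kappa:.3f} AC1={ac1:.3f}")
# agreement=0.90 kappa=-0.053 AC1=0.890
```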

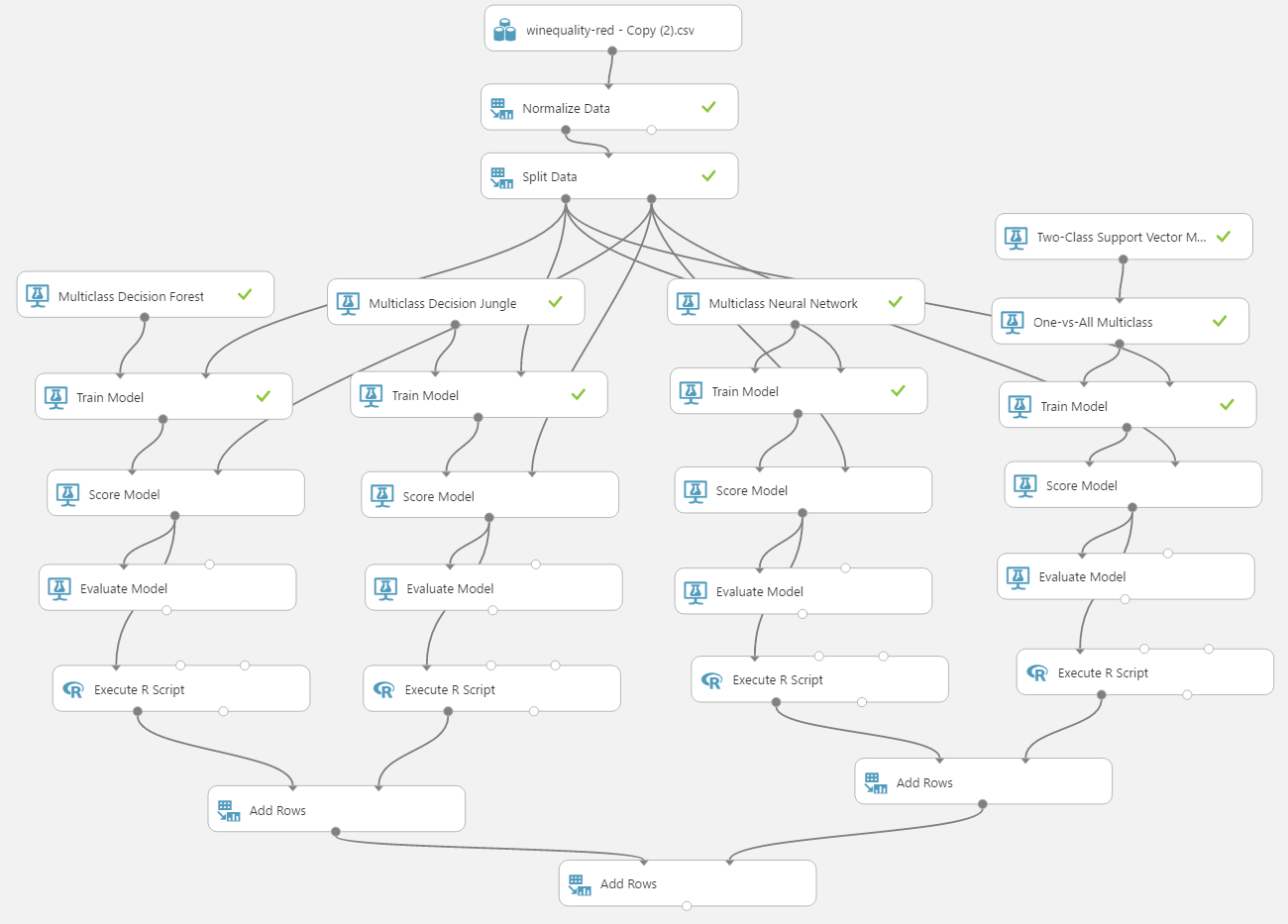

Accuracy versus Kappa for different Classification Models to Predict Wine Quality | Azure AI Gallery

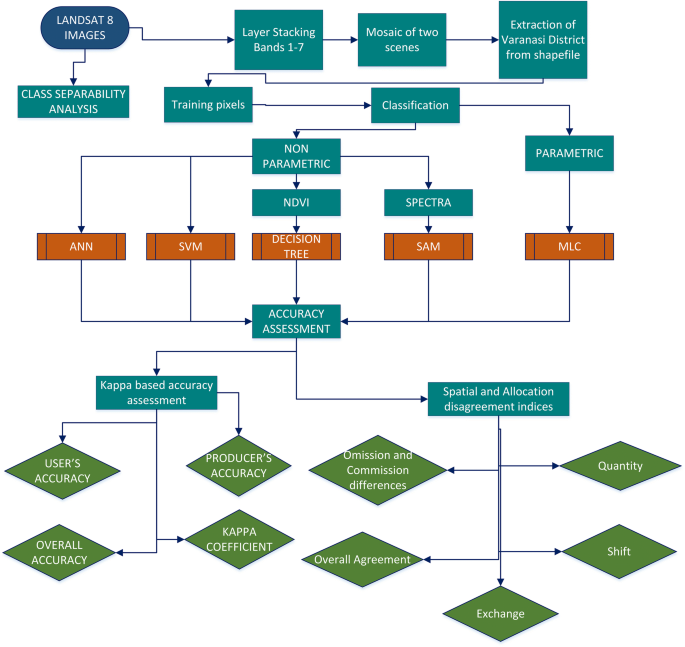

Appraisal of kappa-based metrics and disagreement indices of accuracy assessment for parametric and nonparametric techniques used in LULC classification and change detection | SpringerLink
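Disagreement indices of the kind named in this title split total disagreement D = 1 - overall accuracy into two parts. Quantity disagreement Q measures the mismatch in class totals. Allocation disagreement A measures the mismatch in where classes are placed. The following is a minimal sketch of the decomposition introduced by Pontius and Millones. It assumes a confusion matrix of counts with rows as map classes and columns as reference classes. The function name and counts are hypothetical, and the appraised article may define or report these indices differently.

```python
def disagreement(matrix):
    """Split total disagreement into quantity (Q) and allocation (A) components.

    matrix[i][j] holds counts (rows: map class, columns: reference class).
    Returns proportions (D, Q, A) with D = Q + A = 1 - overall accuracy.
    """
    k = len(matrix)
    total = sum(sum(row) for row in matrix)
    p = [[cell / total for cell in row] for row in matrix]
    row = [sum(p[i]) for i in range(k)]
    col = [sum(p[i][j] for i in range(k)) for j in range(k)]
    diag = [p[g][g] for g in range(k)]
    # Quantity disagreement: half the summed absolute difference of class totals.
    q = sum(abs(row[g] - col[g]) for g in range(k)) / 2
    # Allocation disagreement: per class, twice the smaller of commission and
    # omission, then halved overall.
    a = sum(2 * min(row[g] - diag[g], col[g] - diag[g]) for g in range(k)) / 2
    d = 1 - sum(diag)
    return d, q, a


# Hypothetical 2-class matrix with 70% overall accuracy.
print([round(x, 3) for x in disagreement([[20, 5], [10, 15]])])  # [0.3, 0.1, 0.2]
```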

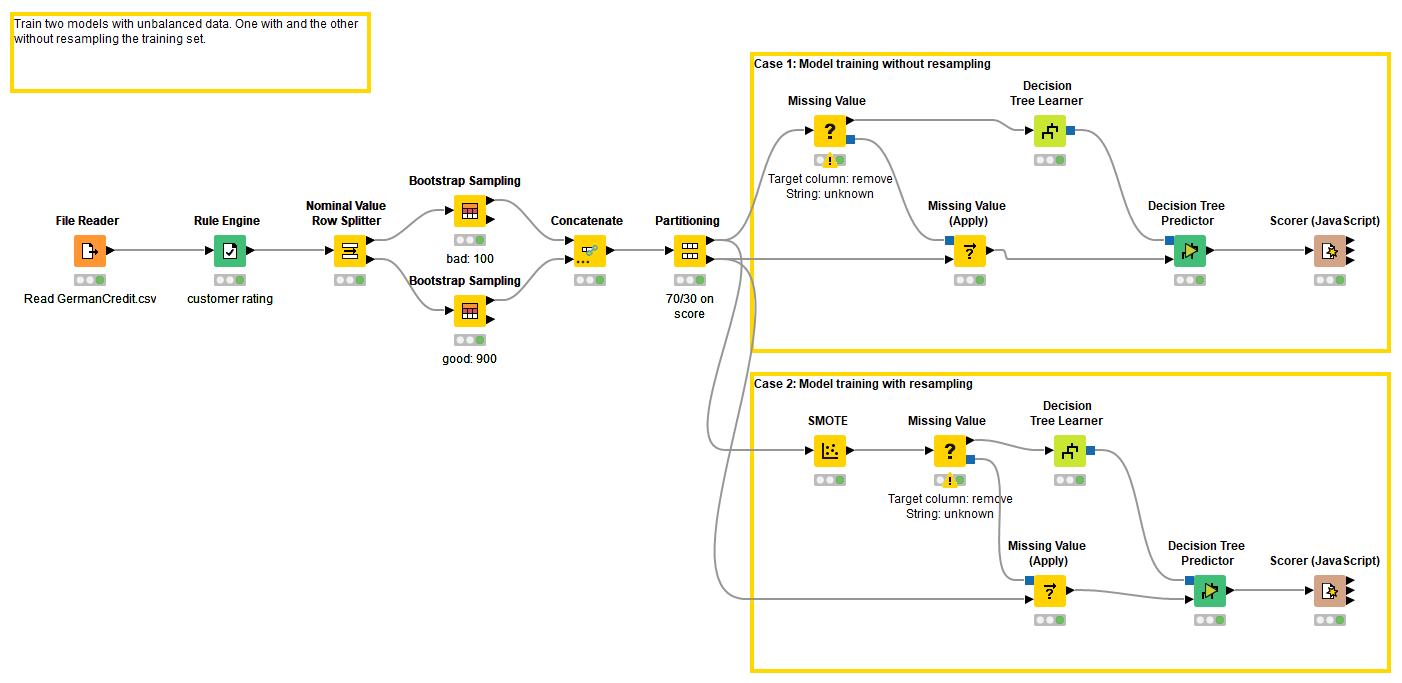

Metrics to evaluate classification models with R codes: Confusion Matrix, Sensitivity, Specificity, Cohen's Kappa Value, McNemar's Test - Data Science Vidhya
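Sensitivity, specificity, and McNemar's test can all be read off a 2x2 confusion matrix. The linked article uses R; the following is a minimal Python sketch. It assumes counts `tp`, `fn`, `fp`, `tn` for a positive/negative problem, and the function name is hypothetical. The McNemar statistic uses only the two discordant (off-diagonal) cells, with an optional continuity correction. This matches the formula used by R's `mcnemar.test`, which applies the correction by default. The p-value relies on the identity that the upper tail of a chi-squared distribution with 1 degree of freedom at x equals erfc(sqrt(x / 2)).

```python
import math


def binary_metrics(tp, fn, fp, tn, correct=True):
    """Sensitivity, specificity and McNemar's chi-squared test for a 2x2 matrix."""
    sensitivity = tp / (tp + fn)  # true positive rate
    specificity = tn / (tn + fp)  # true negative rate
    # McNemar's test uses only the two off-diagonal (discordant) cells;
    # it is undefined when both are zero.
    b, c = fp, fn
    correction = 1 if correct else 0  # optional continuity correction
    chi2 = (abs(b - c) - correction) ** 2 / (b + c)
    # Upper tail of a chi-squared distribution with 1 degree of freedom.
    p_value = math.erfc(math.sqrt(chi2 / 2))
    return sensitivity, specificity, chi2, p_value


# Hypothetical counts: 40 TP, 10 FN, 5 FP, 45 TN.
sens, spec, chi2, p = binary_metrics(40, 10, 5, 45)
print(round(sens, 2), round(spec, 2), round(chi2, 3))  # 0.8 0.9 1.067
```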